I didn't manage to test Burp 1.4; the version tested was 1.3.09, and 1.4 was released less than two months ago, about two months after I finished testing 1.3.09 (again, one of two vendors that had a newer version that wasn't tested, the other one being Cenzic). Although 4 months are more than enough time to implement significant changes to the tested vulnerability detection mechanisms, I don't want to guess or estimate things I didn't manually test myself. I know from rumors that in 1.4 they also implemented a relatively rare feature, privilege escalation checks, which as far as I know is only implemented by 4 other vendors (AppScan, WebInspect, Cenzic Hailstorm and NTOSpider). I intend to contact them officially for my next research in order to test that, but I need to modify the way results are presented to ensure that next time there won't be any significant time differences for any vendor, which means I have to do some infrastructure work upfront.

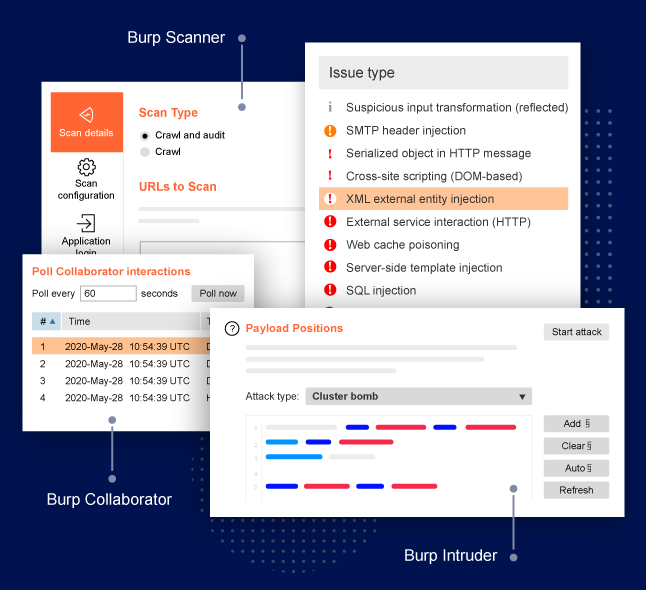

Whenever I tested a tool, I also analyzed its communication (using Burp/Wireshark) and the payloads it was sending in order to conclude something was vulnerable (assuming its license permitted it). In many cases, the tools "Spike Proxy" and "Lilith" sent payloads that could not have confirmed the vulnerabilities that were reported, and in the case of Spike Proxy (a tool from 2003), nearly every page was found vulnerable to every plugin the tool had. As a result, I decided not to include these tools in the benchmark (due to the absence of a logical detection algorithm), and instead placed them under the category of "de-facto fuzzers" (since they provide the user with leads, without performing verifications themselves), found in Appendix B. I did my best, and I did try to detect these issues, as well as a variety of others. I believe that if any of the other tools had behaved in a similar manner, I would have found out and placed it under the same category (which isn't a punishment, just a classification).

There's a good subject that was raised and that I'd like to discuss further. It's related to the position of scanners in the benchmark, and the fact that currently, false positives do not "lower" the position of a scanner in the chart (even though the chart still presents the number of false positives separately). A potential flaw in this scoring mechanism was raised: with this method, any scanner that reports 100% of the pages it scans as vulnerable will be ranked 1st, even if its false positive ratio is also 100%. Well, in my opinion, there's a difference between a scanner with a high ratio of false positives (something I let the users decide whether or not to use) and a scanner that reports everything as vulnerable, and two good examples of this scenario are the tools "Lilith" and "Spike Proxy".
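The ranking flaw described above can be illustrated with a small Python sketch. Everything in it is hypothetical: the scanner names, the test-case counts and the `ScanResult` type are made up for illustration and are not data or code from the benchmark. It only assumes, as stated above, that the chart position depends on detections alone while false positives are shown separately.

```python
from dataclasses import dataclass

# Hypothetical benchmark size (not the real benchmark numbers).
VULNERABLE_CASES = 60       # test pages that really are vulnerable
FALSE_POSITIVE_CASES = 10   # test pages that only look vulnerable


@dataclass
class ScanResult:
    name: str
    true_positives: int    # vulnerable pages the scanner flagged
    false_positives: int   # non-vulnerable pages the scanner flagged


def detection_rate(result: ScanResult) -> float:
    return result.true_positives / VULNERABLE_CASES


def false_positive_rate(result: ScanResult) -> float:
    return result.false_positives / FALSE_POSITIVE_CASES


def rank_by_detection(results: list[ScanResult]) -> list[ScanResult]:
    # Position depends on detections only; false positives never lower it.
    return sorted(results, key=detection_rate, reverse=True)


results = [
    ScanResult("careful-scanner", true_positives=45, false_positives=1),
    ScanResult("noisy-scanner", true_positives=50, false_positives=6),
    # Flags every page it sees, vulnerable or not.
    ScanResult("flag-everything", true_positives=60, false_positives=10),
]

ranking = rank_by_detection(results)
for position, result in enumerate(ranking, start=1):
    print(
        f"{position}. {result.name}: "
        f"detection {detection_rate(result):.0%}, "
        f"false positives {false_positive_rate(result):.0%}"
    )
```

In this made-up data set, the tool that flags every page takes first place even though every one of its false positive test cases is also reported, which is the kind of behavior that led to classifying such tools separately rather than ranking them.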
